The 8th edition of the Machine Learning and Knowledge Engineering (MAKE) symposium, held from April 7 to April 9, 2026, brought together researchers and practitioners to explore the evolving interplay between machine learning, knowledge representation, and reasoning. This year’s focus on knowledge-grounded semantic agents reflected a growing consensus: the future of AI lies not in scaling models alone, but in integrating learning with structured, interpretable, and controllable representations.

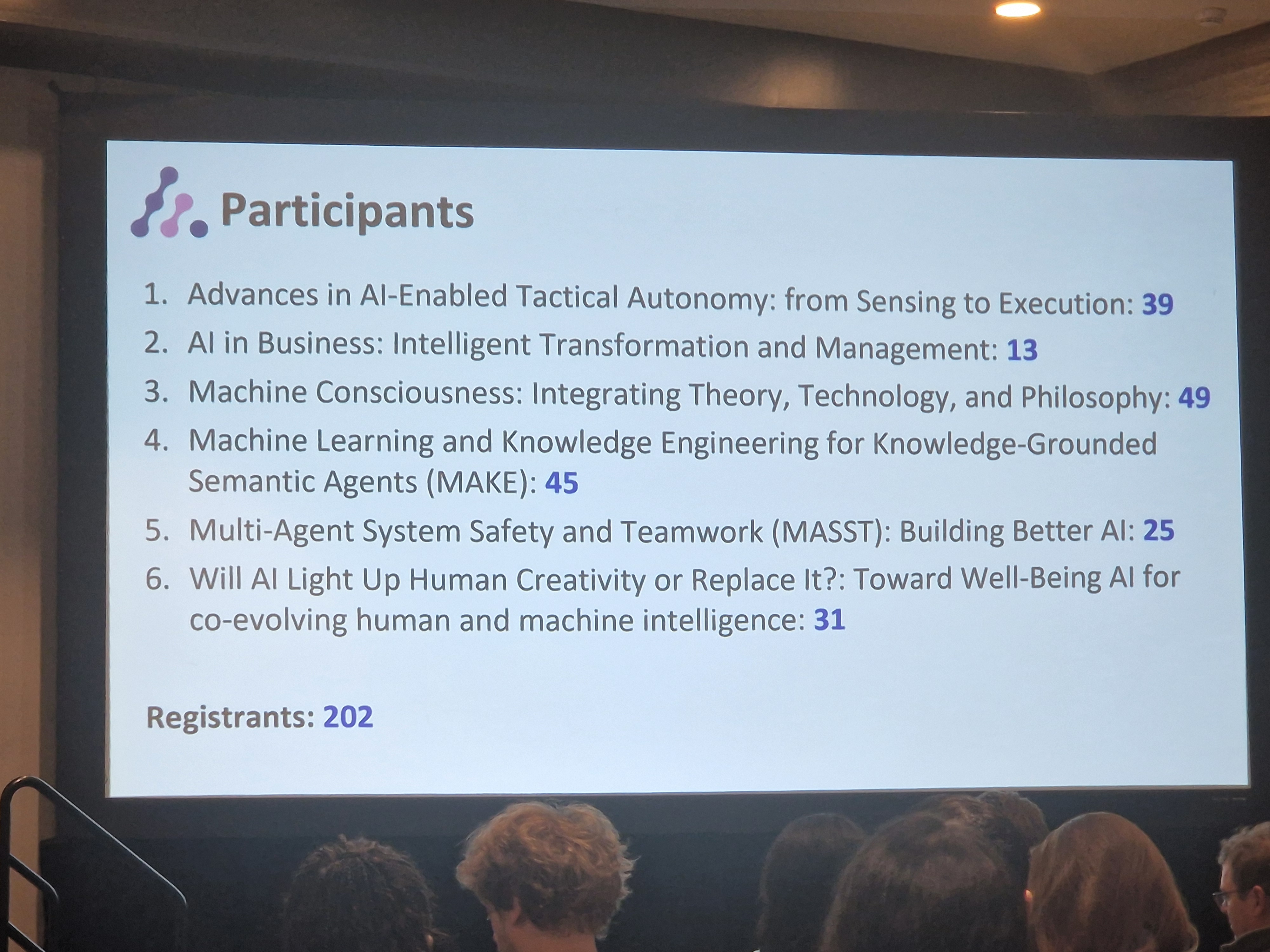

Across three days, the symposium featured 27 accepted papers and 3 posters, continuing the trajectory of the series, which has now hosted over 225 publications since its inception in 2019.

From Models to Systems: The Keynote Perspective

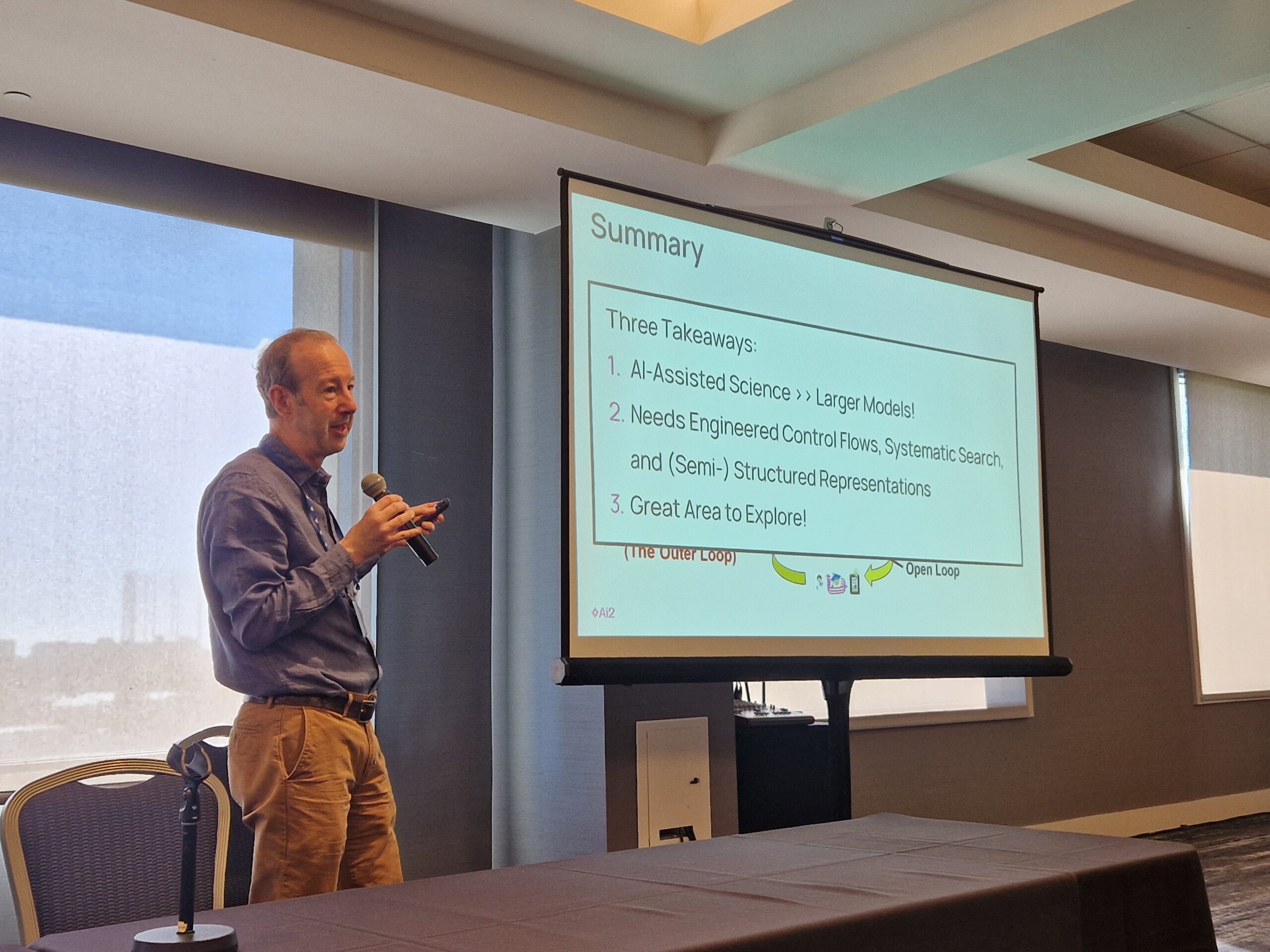

A central highlight was the keynote by Peter Clark (Allen Institute for Artificial Intelligence). In his talk, “Building Discovery Machines: Systematic Search, Structured Knowledge, and the Outer Loop of Long-Horizon Research Agents,” he argued for a shift in perspective from executing isolated tasks to designing systems capable of sustained scientific inquiry.

Clark distinguished between the inner loop of experimentation and the outer loop of research, in which hypotheses are generated, revised, and systematically explored. His work on the Asta project demonstrated that long-horizon research agents require explicit representations of both knowledge and research state. Rather than relying solely on increasingly large models, effective discovery systems must combine language models with structured knowledge and systematic search.

This keynote set the tone for the symposium: hybrid architectures are not a workaround but a necessary design principle.

Hybrid AI in Practice: Bridging Learning and Reasoning

A recurring theme throughout the symposium was the integration of neural and symbolic methods, often framed as hybrid or neuro-symbolic AI. Contributions spanned multiple levels of abstraction, from theoretical frameworks to deployed systems.

Several works demonstrated how augmenting large language models with structured components improves reliability and performance. For example, knowledge graphs were used to evaluate and constrain agent decisions in high-stakes scenarios such as search and rescue. Similarly, semantic scene graphs enabled robotic systems to better interpret and navigate complex environments.

Another line of work explored architectural patterns for agent design. Simple but principled control structures such as plan-act-monitor loops were shown to significantly enhance performance across domains including robotics, scientific discovery, and drug development. Likewise, introducing explicit state representations and typed interfaces for tool use improved robustness compared to stateless agent designs.
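To make the pattern concrete, here is a minimal sketch of a plan-act-monitor loop with an explicit state object and a typed tool interface. It is an illustration only, not the design of any system presented at the symposium: the toy task (reaching a target integer), the names `AgentState`, `TOOLS`, `plan`, `act`, `monitor`, and `run`, and the planning rule are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AgentState:
    """Explicit, inspectable state carried across loop iterations."""
    goal: int
    value: int = 0
    history: List[str] = field(default_factory=list)


# Typed tool interface (hypothetical): every tool maps an int to an int.
Tool = Callable[[int], int]

TOOLS: Dict[str, Tool] = {
    "increment": lambda x: x + 1,
    "double": lambda x: x * 2,
}


def plan(state: AgentState) -> str:
    """Choose the next tool: double if that does not overshoot, else increment."""
    if state.value > 0 and state.value * 2 <= state.goal:
        return "double"
    return "increment"


def act(state: AgentState, tool_name: str) -> None:
    """Apply the chosen tool and record the step in the state."""
    state.value = TOOLS[tool_name](state.value)
    state.history.append(tool_name)


def monitor(state: AgentState) -> bool:
    """Report whether the goal has been reached."""
    return state.value == state.goal


def run(goal: int, max_steps: int = 50) -> AgentState:
    """Alternate monitoring, planning, and acting until done or out of steps."""
    state = AgentState(goal=goal)
    for _ in range(max_steps):
        if monitor(state):
            break
        act(state, plan(state))
    return state


if __name__ == "__main__":
    final = run(10)
    print(final.value, final.history)
```

Because the state is an ordinary data object rather than something implicit in a prompt, each step can be logged, replayed, or checked against the tool signatures, which is one plausible reading of why stateful designs were reported as more robust.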

These findings collectively reinforce a key insight: structure, not scale alone, is critical for dependable AI systems.

Formalization, Interpretability, and New Representations

The challenge of translating natural language into formal representations emerged as a central research question. Numerous contributions addressed how meaning can be captured in structured, machine-interpretable forms.

One notable example explored the formalization of humor using first-order logic, illustrating both the potential and the limits of symbolic approaches in capturing nuanced human concepts. Other work proposed labeled logic programs as a unifying reasoning framework, aiming to simplify the integration of formal reasoning into modern AI systems.

Beyond logic-based approaches, researchers also investigated alternative computational paradigms. Compositional function networks, for instance, replace neurons with interpretable mathematical functions, offering a promising direction for achieving both performance and transparency.
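As a rough illustration of the general idea of building a model from named mathematical functions instead of opaque neurons, the sketch below composes a few function nodes and keeps a human-readable formula alongside the computation. It is not the architecture proposed in the symposium contribution; the `Node` type, the node constructors `linear` and `sine`, and the combinators `compose` and `add` are hypothetical names chosen for this example.

```python
import math
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Node:
    """A function node that can both compute a value and describe itself."""
    fn: Callable[[float], float]
    formula: Callable[[str], str]  # renders the node given its input expression


def linear(a: float, b: float) -> Node:
    return Node(lambda x: a * x + b, lambda s: f"({a}*{s} + {b})")


def sine(freq: float) -> Node:
    return Node(lambda x: math.sin(freq * x), lambda s: f"sin({freq}*{s})")


def compose(outer: Node, inner: Node) -> Node:
    """Sequential composition: outer(inner(x))."""
    return Node(
        lambda x: outer.fn(inner.fn(x)),
        lambda s: outer.formula(inner.formula(s)),
    )


def add(left: Node, right: Node) -> Node:
    """Parallel composition: left(x) + right(x)."""
    return Node(
        lambda x: left.fn(x) + right.fn(x),
        lambda s: f"({left.formula(s)} + {right.formula(s)})",
    )


# A small model: a sinusoid of a scaled input plus a linear trend.
model = add(compose(sine(1.0), linear(2.0, 0.0)), linear(0.5, 1.0))

if __name__ == "__main__":
    print(model.formula("x"))
    print(model.fn(0.0))
```

The parameters here are fixed by hand; in a trainable variant they would be fitted to data, while the rendered formula would remain available as an explanation of what the model computes.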

Some researchers also proposed inverting the standard architecture. Instead of augmenting neural systems with symbolic components, they advocated for symbolic-first systems that use language models primarily for data acquisition and preprocessing. This perspective highlights the increasing flexibility in how hybrid systems can be designed.

Applications Across Domains

The symposium showcased applications across science and engineering, including healthcare, chemistry, robotics, and manufacturing. Contributions demonstrated how hybrid approaches can improve diagnostic reasoning, enable safer conversational systems, and support scientific workflows.

Work combining recurrent neural networks with large language models showed improved performance in order-sensitive domains, while advances in semantic watermarking and safety-aware dialogue systems addressed concerns around trust and reliability.

Poster presentations complemented the main sessions, highlighting emerging directions such as affordance learning from language models and more nuanced evaluation strategies for retrieval-augmented generation.

Community and Outlook

The symposium was chaired by Andreas Martin and supported by an international team of organizers and session chairs. Session chairs included Jane Yung-jen Hsu, Leilani H. Gilpin, Pedro A. Colon-Hernandez, Peter Clark, Reinhard Stolle, Thomas Schmid, and Yen-Ling Kuo. The plenary session featured Jingying Yang, who synthesized the discussions and highlighted the unifying theme of translating complex real-world phenomena into structured representations for trustworthy AI.

The organizing committee comprised Andreas Martin, Pedro A. Colon-Hernandez, Maaike de Boer, Hans-Georg Fill, Aurona Gerber, Leilani H. Gilpin, Pascal Hitzler, Jane Yung-jen Hsu, Yen-Ling Kuo, Emanuele Laurenzi, Alessandro Oltramari, Thomas Schmid, Paulo Shakarian, Reinhard Stolle, and Frank van Harmelen.

In addition, authors of accepted papers were invited to submit extended versions of their work to a dedicated special issue of the Neurosymbolic Artificial Intelligence Journal, fostering continued development and consolidation of the research presented at the symposium.

Beyond individual contributions, MAKE 2026 emphasized a broader research agenda. Discussions pointed toward several emerging directions, including memory and reasoning, metacognitive agents, human-AI interaction and communication, world models, and human symbolic neural networks.

The symposium concluded with a shared recognition that advancing AI requires not only better models but also better integration of learning, reasoning, and knowledge. By continuing to bridge these paradigms, the MAKE community plays a central role in shaping the next generation of intelligent systems.

This report was written by symposium chair and co-organizer Andreas Martin and plenary representative Jingying Yang.